By Stephen DeAngelis

Three years ago, a Fortune 500 manufacturer carefully delegated an annual operating plan for an important function of the business to an autonomous machine. Not the spreadsheet work. Rather, the complex work of understanding the decision space, weighing competitor actions, absorbing external events, and optimizing thousands of individual choices into a single coherent plan.

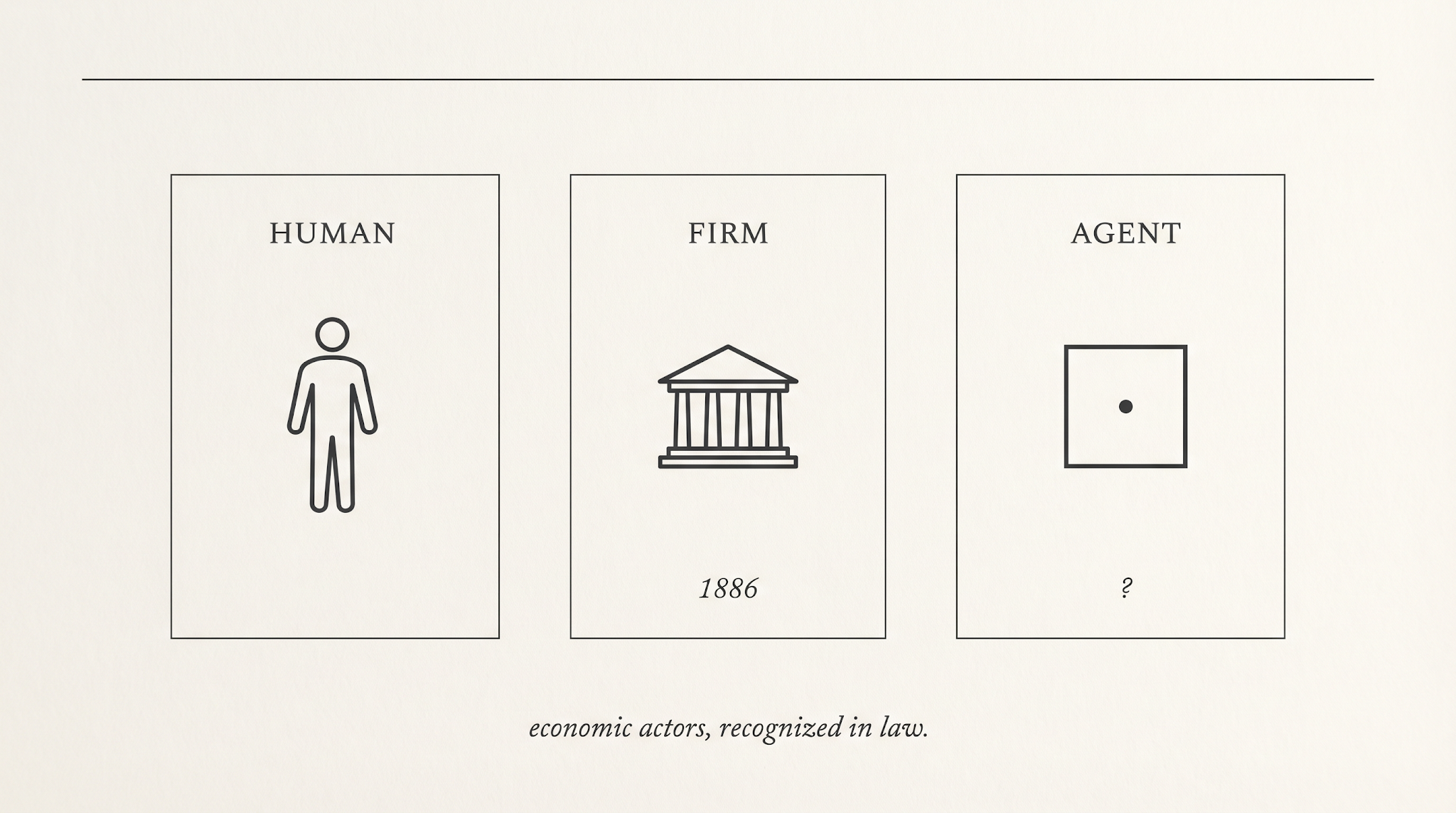

The corporation was the first economic actor we built that had no body, no soul, and no need to sleep. We are now building the second.

Senior leadership delegated a defined set of decision rights to an autonomous enterprise system, the kind of work that had previously belonged to a team of experienced humans. I have watched this happen in some of the world’s leading companies, in rooms where the decision was made carefully, by people who knew what they were authorizing. Most of the system’s recommendations were accepted automatically. A subset was reviewed by humans: selectively, by exception, or by sample.

Nothing dramatic happened. The plan landed. Revenue grew. Profits were realized. Competitors were outmaneuvered. Margins held or widened. From the outside, the company looked like it had bought another piece of enterprise software and made it work.

From the inside, something else happened. A class of decisions that had always belonged to people now belonged, in the operating sense, to a system. Inside its defined scope, it ran on its own clock, pursued the corporate objectives it had been given, and produced consequences the firm had to live with.

By the time the plan had landed, the system was pacing the cadence of the firm, not following it. The vocabulary we used for these systems no longer described what they were doing.

What the second actor unlocks

The upside is the reason serious leaders are doing this at all, and it deserves to be named first.

A firm whose operating decisions live partly inside autonomous systems handles uncertainty on a different timescale. Conditions change at ten in the morning. The plan adjusts by noon. A supplier disruption, a demand signal, a price move in a downstream input. The firm absorbs each one at the speed of the market rather than the speed of the next planning cycle. The annual operating plan stops being an artifact and becomes a continuous posture. Coherence with the corporate objective is held across thousands of small decisions a day, in a way no human team can sustain.

That is the prize. A firm that re-plans as the world changes, decides at the speed of its markets, and holds strategy and execution in alignment across thousands of small choices a day. Not a marginal efficiency gain. A different operating model.

The market has already begun to price the distinction, and the number is large.

The market has noticed

Between January and March of this year, the enterprise software sector lost roughly two trillion dollars in market capitalization, the largest non-recessionary 12-month drawdown in more than three decades. The software sector’s weight in the S&P 500 fell from twelve percent to under nine in three months. The companies hit hardest were not poorly run. They were the canonical names of the previous era. ServiceNow. Salesforce. Workday. Adobe. Atlassian. Their problem was not execution. Their problem was that the per-seat license, the dominant pricing model of enterprise software for two decades, had quietly assumed a human at every seat.

I have argued elsewhere that the divergence between traditional software and AI-native systems, and between GenAI-only architectures and multi-engine platforms, is reshaping the enterprise software industry. The point I want to make here is narrower. When the agent does the work, the seat is empty. When the seat is empty, the license is a line item that no longer maps to anything real. Investors saw this faster than most boards did. The repricing was not a mood swing. It was Darwinian. The business model of traditional software, and the operating model of the firms that bought it, had both been built for a world in which a human remained the unit of work.

The two-trillion-dollar repricing is the public, financial signal that the tool frame is breaking. The market knows. The vocabulary has not caught up.

What the metaphor is doing

Language carries policy. The word tool, applied to a system, drags an entire infrastructure of assumptions behind it. A tool is held by a human. A tool extends human will. A tool is the medium of action, never its source. When something goes wrong with a tool, we ask what the human did with it, never what the tool decided.

That assumption is the spine of how we regulate, contract, insure, audit, and manage these systems. Procurement frameworks treat them as software licenses. Liability regimes treat them as products. Audit committees treat them as controls. Insurance carriers treat them as fixed assets. Boards treat them as line items in a technology plan. Each of these treatments is coherent only if a human remains the agent of action.

When humans step back, the entire stack of procurement, liability, audit, insurance, and board treatment quietly stops describing what is in the room.

Where the frame cracks

Three pressures, all already present in production environments, push past the tool frame.

The first is goal persistence and coherence. A wrench does not want anything between uses. A modern decision system holds objectives across days, weeks, and planning cycles. Each morning it returns to the state and the target it was given and continues. Coherently maximizing an objective function across timescales, without continuous human direction, is a property of agents, not of tools.

The second is resource allocation. These systems do not merely advise. They move budget, inventory, capacity, attention, and increasingly money. Even in advisory mode, when a recommendation is implemented at scale by default and reviewed only by exception, the system is allocating resources. Reviewing in aggregate is not the same kind of decision as approving each one. The vocabulary of approval has become the vocabulary of audit.

The third is machine time. Markets, regulators, courts, and boards run on human time. Quarters. Annual audits. Multi-year cases. Decadal regulatory cycles. Agent-to-agent commerce, already running in procurement, treasury, logistics, and pricing, moves in milliseconds. The mismatch is not an inconvenience. It is a structural defect in the institutions we rely on to keep commerce honest.

Each of these, on its own, can be managed within the tool frame by adding a footnote. Together, they require a different category.
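The second pressure, recommendations implemented by default and reviewed only by exception or by sample, can be made concrete with a minimal sketch. Everything named here is an assumption for illustration: the `Recommendation` type, the `route` function, the threshold, and the sample rate are hypothetical and do not describe any particular production system.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    rec_id: str
    amount: float  # hypothetical monetary impact of the recommendation


def route(rec: Recommendation, exception_threshold: float,
          sample_rate: float, rng: random.Random) -> str:
    """Decide how a single recommendation is handled.

    - "review_exception": impact is large enough that a human must look.
    - "review_sample": randomly drawn for a spot check.
    - "auto_accept": implemented by default, visible only in aggregate.
    """
    if abs(rec.amount) >= exception_threshold:
        return "review_exception"
    if rng.random() < sample_rate:
        return "review_sample"
    return "auto_accept"


def summarize(recs, exception_threshold, sample_rate, seed=0):
    """Count how many recommendations land in each route."""
    rng = random.Random(seed)  # seeded so the sketch is reproducible
    counts = {"review_exception": 0, "review_sample": 0, "auto_accept": 0}
    for rec in recs:
        counts[route(rec, exception_threshold, sample_rate, rng)] += 1
    return counts
```

Under these assumptions, a low sample rate and a high threshold leave most recommendations in the `auto_accept` bucket, which is the sense in which the system, not the reviewer, is doing the allocating.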

The first non-human actor

We have done this before. Once.

The argument that follows sits inside a recognizable tradition. Ronald Coase asked in 1937 why firms exist at all, rather than every transaction running through the market, and answered that the firm exists where coordination inside an entity is cheaper than coordination through prices. Oliver Williamson extended that insight into a theory of transaction costs and the boundaries of the firm. Margaret Blair, working closer to the present, showed that the corporation is best understood as a legal device for holding the joint output of a team whose members cannot easily contract with each other one by one. Each of these scholars was studying the same question from a different angle. What kind of entity is a firm, and why did we have to build it?

The corporation, as a legal person capable of holding property, signing contracts, and bearing liability in its own name, is a 19th-century invention. The American shape of it was assembled in fragments. Dartmouth College in 1819 gave the corporation a constitutional protection of its charter. Santa Clara County in 1886 carried into the headnote the proposition that the corporation was a person under the Fourteenth Amendment. A century of statutes, cases, and accounting conventions filled in the rest. By 1900, a manager could enter a building, sign a paper, and obligate an entity that could outlive its founders, contract in its own name, and bear consequences across generations.

What that required was not one invention. It was a stack of them, each addressing a problem the new actor created. Limited liability gave investors a defined locus of obligation, so that the firm itself, rather than every shareholder, was the entity that could be sued. Perpetual succession allowed the firm to outlive the people who started it, which meant the law had to settle what happened when officers and owners changed without changing the entity beneath them. Fiduciary duty fixed who owed what to whom inside the firm, so that managers acting in the firm’s name were held to a standard distinct from their personal interests. The audit profession was built, almost from nothing, to give outsiders a continuous account of what was happening inside an entity they could not enter. The corporate seal, and later the authorized signature, gave the firm a way to bind itself by contract without a human body to sign with.

Each of those inventions has a present-day analog the second actor will need. A defined locus of obligation for autonomous systems, so that loss has somewhere to land. A settled answer to what happens when an agent’s operator changes, or when the underlying model is retrained, and whether the entity persists across the change. A duty owed by agents, or by their operators, to the parties on the other side of agent-to-agent commerce. An audit regime that runs at machine time rather than at quarterly time. A cryptographic identity that lets one agent contract with another and lets a court later determine which entity bound itself to what. The corporation required a stack of named legal-institutional inventions to be workable. The second actor will require its own stack, and most of it has not been built.

The second actor is the second non-human entity we have built that holds goals, allocates resources, contracts with other systems, and produces consequences in its own name.

The corporation is the first and so far the only non-human entity we have built that is recognized, in law and in practice, as an economic actor in its own right. The institutions that surround it, including securities regulation, double-entry accounting, fiduciary duty, board governance, audit, bankruptcy, and antitrust, were not handed down. They were invented, often slowly, often after avoidable harm, to make the new actor workable inside a society that had been built for human actors only.

A superintelligent agent that coherently holds goals, allocates resources, contracts with other systems, and produces consequences without a human approving each step is not a tool with an upgrade. It is a candidate for the third box. Whether we put it there deliberately, or whether the courts and the markets put it there for us by accident, is the live question.

What the new category would require

It is worth being concrete about what the third box, treated seriously, would need.

It would need standing. Not necessarily personhood in the full corporate sense, but a defined locus of obligation. Today, when an autonomous system causes a loss, the question of who pays runs through a chain of operator, vendor, integrator, model provider, and customer that no one designed and no court has finished mapping.

It would need an audit regime adapted to machine time. Quarterly review of an entity that makes thousands of consequential decisions per second is not audit. It is archaeology. The institutions that watch firms will need to watch agents continuously or accept that they cannot watch them at all. What this implies for boards is more concrete than the abstract suggests, and I will return to it in the close.

It would need a transparent, auditable decision-making trail that runs from data to analytics to insights to recommendations to post-event analysis. A glass box, not a black one. This is the technical foundation everything else rests on. Without it, every claim of oversight is a claim of faith.
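One way to picture such a trail is a tamper-evident log in which each record is hashed together with the one before it. The sketch below is an illustration under stated assumptions, not a description of any existing product: the `DecisionTrail` class, its field names, and the stage labels (taken loosely from the sequence above) are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Stage labels follow the sequence named in the text; the identifiers are hypothetical.
STAGES = ("data", "analytics", "insight", "recommendation", "post_event")
GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, stage: str, payload: dict) -> str:
    """Hash an entry together with the hash of the entry before it."""
    body = json.dumps(
        {"prev": prev_hash, "stage": stage, "payload": payload}, sort_keys=True
    )
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class DecisionTrail:
    entries: list = field(default_factory=list)

    def append(self, stage: str, payload: dict) -> str:
        """Record one step of a decision and chain it to the previous step."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = _digest(prev_hash, stage, payload)
        self.entries.append(
            {"stage": stage, "payload": payload, "prev": prev_hash, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, entry["stage"], entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True
```

An auditor holding only the final hash can, in this sketch, detect after the fact whether any earlier step was altered. A real regime would also need signatures, retention rules, and independent custody of the log, none of which is shown here.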

It would also need contracting infrastructure that recognizes agent-to-agent transactions as a distinct class, with their own evidentiary, settlement, and dispute mechanisms. Treating them as ordinary commercial transactions executed unusually fast is the path of least resistance and the path of greatest accumulated risk.

It would need a settled answer to the principal-agent question of governance. When the principal can no longer fully understand what the agent did, every board, every regulator, and every executive needs a defensible answer to how oversight nonetheless occurs, which is precisely why the auditable decision trail above is not optional. Pretending the asymmetry does not exist, or that it can be resolved by asking the agent to explain itself, will not survive a serious adverse event.

The case for confidence

It would be easy, in 2026, to write a piece that ends in alarm, or one that ends in a call for restraint. I am going to do neither. The right response to the second actor is to build the institutions it will need, in advance of the harm, while the technology continues to grow. Governance and growth are not a trade. They are the same project.

The 19th century inherited the corporation without a plan and improvised an institutional response over a hundred years. We are inheriting the autonomous agent with vastly more institutional capacity, vastly better information, and the benefit of having watched what worked and what did not the first time.

We have the legal scholarship. We have the regulatory machinery. We have the glass-box technology to create an auditable decision-making trail. We have the operating experience and muscle memory accumulating now in firms like the Fortune 500 manufacturer at the opening of this piece, where these systems are already at work and the organization has not collapsed. It has accelerated.

Governance, not restraint

The temptation is to fear the agent and to call for a moratorium, a cap, a pause, a leash. That instinct mistakes restraint for governance. Restraint preserves the comfort of the old frame for a few more years and leaves the new actor unbuilt-for when it arrives at scale anyway. The agent does not stop growing because a committee has asked it to wait.

Governance is the harder discipline. It is the work of letting the actor grow while building the standing, the audit, the contracts, and the liability rules it will operate inside. The generation that wrote the corporation into the institutional record in 1819 and 1886 was not asked to stop the corporation. It was asked to make the corporation workable. It did so imperfectly, and the imperfections cost real people real harm. We can build the institutions of the second actor in years rather than centuries, with the textbook of the first one open on the desk.

The work in front of us is not technical. The technology is arriving on its own schedule, and no executive in the room has the option of slowing it down. The work is conceptual and institutional. It begins with the willingness to stop calling these systems tools when they have stopped behaving as tools, and to start building the standing, the audit regime, the contracting infrastructure, and the liability rules the second actor will operate inside, while it grows, not after the first serious loss.

The first-mover question

The defensive framing misses a further turn. Boards that build the institutional scaffolding for the second actor first will not just avoid liability when the first serious loss arrives. They will compound competitive advantage from the work.

The pattern is recent enough to remember. The firms that built IT audit committees in 1998, that put control frameworks around their digital systems before regulators required it, went on to operate digital businesses through the 2000s with a confidence the laggards lacked. The laggards spent the 2010s catching up, often under regulatory pressure, often after a public incident, almost always at higher cost than if they had moved earlier. The same pattern is available now to the boards that move first on autonomous systems.

The institutional-building work is a moat, not a cost. A firm whose autonomous decisions are auditable, whose agent contracts are enforceable, and whose liability is mapped can deploy these systems into territory the laggards cannot enter. Boards that understand this will treat the build as strategic rather than as compliance. Boards that do not will find the work waiting for them later, on someone else’s schedule, at someone else’s price.

Concretely. Every board without an autonomous-systems audit subcommittee by the end of 2027 will be in the position the boards without IT audit committees were in 1998, with the same predictable cost when the first serious loss arrives. That is a falsifiable claim. I am willing to be wrong about the date. I am not willing to be wrong about the direction.

The scaffolding will not build itself. The human mind, handed a clear problem, has built scaffolding like this before. The first non-human actor took us a hundred years to make workable. The second one does not have to.

Footnotes

1. J.P. Morgan analysis cited in Fortune, February 2026, fortune.com/2026/02/16/trillion-dollar-ai-market-wipeout-investors-bet-winner. The largest non-recessionary 12-month software drawdown in over 30 years, with the sector’s weight in the S&P 500 falling from 12.0% to 8.4%.

2. See Stephen F. DeAngelis, The Two Divides Reshaping Enterprise Software, DeAngelis Review, April 2026, on the AI Divide between traditional and AI-native platforms and the Cost Divide between GenAI-only and multi-engine architectures.
